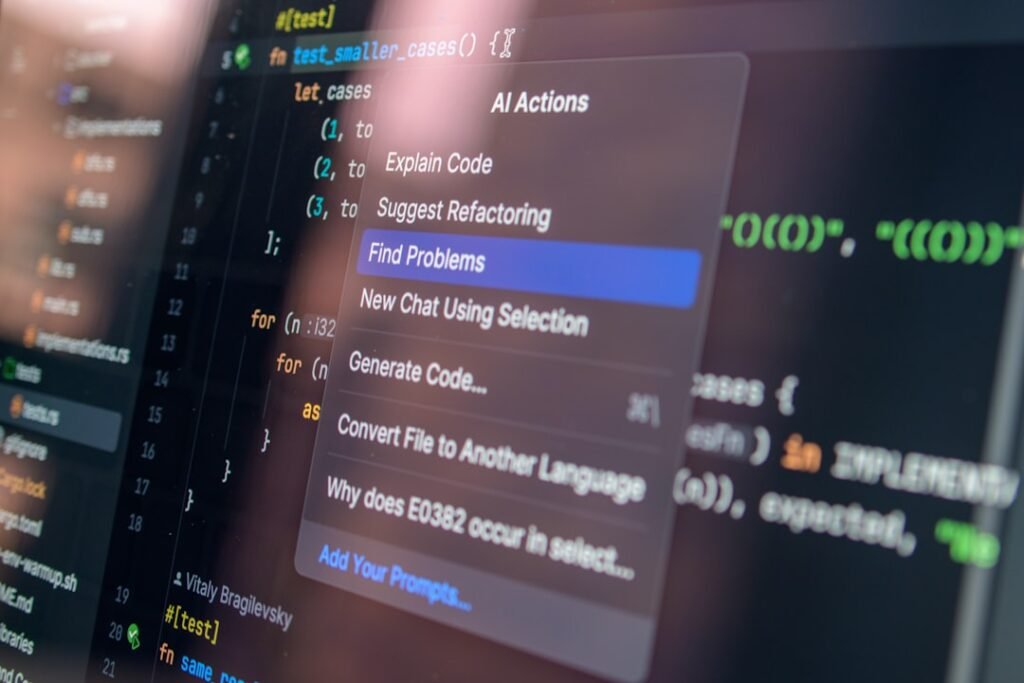

AI-generated code can speed up development, but it should not bypass code review. If you need the wider context, start with the guide to using AI coding agents safely. This guide focuses on reviewing AI-written code before it ships, with practical controls that a UK team can put in place before the next tool, supplier or incident forces the issue.

Generated code can be plausible, tidy and insecure at the same time. It may mishandle authentication, validation, permissions, secrets or error cases. The answer is not panic and it is not blind adoption. The answer is a clear boundary: what is allowed, who owns it, what must be checked, and how the team will know if something goes wrong.

Why AI-generated code review matters now

AI coding tools are now used by non-developers, freelancers, agencies and product teams, including in WordPress and small business projects. This is why the topic should sit in normal business planning rather than being treated as a side project. Security works best when the control is built into the workflow, not added after staff have already found their own shortcuts.

A useful external reference for AI-generated code review is OpenAI: Codex Security. Read it as a baseline, then compare it with the exact systems, data and decisions your team handles.

The question is not whether AI wrote the code. The question is whether the team can prove the code is safe enough to ship.

The risk in plain English

The risk is that a confident-looking patch reaches production without anyone understanding it, testing it or reviewing it for security. Most failures are not caused by one dramatic mistake. They are caused by small permissions, old assumptions and unclear review points connecting together. A safe process breaks that chain before one weak point becomes a business problem. Typical weak points in generated code include:

- Missing input validation.

- Broken access control.

- Unsafe dependency changes.

- Secret exposure.

- SQL or command injection.

- Weak error handling.
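To make one item on the list above concrete, here is a minimal sketch of the injection pattern a reviewer should look for, using Python's standard `sqlite3` module. The `users` table and the function names are hypothetical and exist only for illustration; the same idea applies to any database driver that supports parameterised queries.

```python
import sqlite3


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # Pattern to reject in review: user input is concatenated into the SQL text.
    query = "SELECT id, name FROM users WHERE name = '" + name + "'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list:
    # Parameterised query: the value is passed separately from the SQL text.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (name,)
    ).fetchall()


def make_demo_db() -> sqlite3.Connection:
    # In-memory database with two hypothetical users, for demonstration only.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany(
        "INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)]
    )
    return conn
```

Both functions return the same rows for an ordinary name, which is why the unsafe version can pass a quick manual check. The difference only appears when the input contains SQL syntax, such as `x' OR '1'='1`.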

What good looks like

Good practice for AI-generated code review should be easy to recognise in daily work. People should know the rule, the owner should be able to show the setting or record, and the team should understand what to do if the control fails.

| Area | Weak setup | Safer setup |
|---|---|---|
| Authentication | Assumes user is trusted | Check roles and sessions |
| Data handling | Stores too much data | Minimise and sanitise |
| Dependencies | Adds package casually | Review source and maintenance |

A practical checklist

Use the checklist below as the first working version for AI-generated code review. Review it when the tool, supplier, workflow or risk level changes.

- Read the diff line by line.

- Run tests and linting.

- Check authentication and authorisation.

- Search for secrets.

- Review dependency changes.

- Test failure cases.

- Use a pull request even for small changes.
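The "search for secrets" step in the checklist above can be partly automated. The sketch below scans the added lines of a unified diff for a few credential-like patterns. The patterns and the function name `find_secrets_in_diff` are illustrative assumptions, not a complete scanner; a dedicated secret-scanning tool will cover far more formats.

```python
import re

# Illustrative patterns only; a real scanner covers many more formats.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "hardcoded_credential": re.compile(
        r"(?i)(?:password|passwd|secret|api_key|token)\w*\s*[:=]\s*"
        r"['\"][^'\"]{8,}['\"]"
    ),
}


def find_secrets_in_diff(diff_text: str) -> list[tuple[int, str]]:
    """Return (line number, pattern name) pairs for suspicious added lines."""
    findings = []
    for line_no, line in enumerate(diff_text.splitlines(), start=1):
        # Only added lines matter; skip the "+++ b/file" header line.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_no, name))
    return findings
```

A check like this catches obvious mistakes early, but an empty result is not proof that the diff is clean. It supports the line-by-line read; it does not replace it.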

How to roll this out without slowing the team down

For AI-generated code review, begin with the workflow where a mistake would hurt most. One completed improvement in that place is more useful than a broad plan that nobody owns.

- Name an owner for AI-generated code review.

- List the tools, accounts, data or workflows involved.

- Decide what is allowed, blocked and approval-only.

- Make the rule easy to find and easy to follow.

- Add a review date and a reporting route for problems.

- Update related posts, policies or checklists when the process changes.

Common mistakes

The mistakes below are common around AI-generated code review. They become easier to fix once the team knows who should notice them and what the next action should be.

- Trusting code because it works once.

- Skipping review for “simple” changes.

- Letting AI add dependencies without review.

- Ignoring security-sensitive paths.

Internal links and next steps

Code review connects developer security, AI agent safety and business continuity. For a broader control set, read AI agent security at work and small business cybersecurity checklist. If the topic touches personal data, also connect it to personal data sharing and privacy basics.

Questions people usually ask

Can AI-generated code be secure?

Yes, but only after a review that is at least as strict as the one applied to human-written code.

What should non-developers avoid?

Deploying generated code directly to production without a developer or security review.

Should AI code be labelled?

Teams should at least make reviewers aware when a significant change was AI-assisted.

Final recommendation

Treat AI-generated code as a draft that needs tests, review and context before production. Write down the rule, test it against a real example, and improve it after the first review. Good security is not a perfect document. It is a repeatable behaviour that survives busy days.

Keep the review boring and consistent

The strongest review process is predictable. Check the diff, run tests, inspect dependency changes, review security-sensitive logic and confirm rollback options. AI can speed up drafting, but the final decision to ship should still rest on evidence the team understands.

A realistic workplace example

An AI-generated patch fixes a visible bug but also changes validation logic. The page works in a browser, yet the security-sensitive path is now weaker. This is why code review has to inspect assumptions, not only outcomes.
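One way to catch this kind of regression is to keep small tests for the failure paths, so that a patch which loosens validation fails a check even though the page still renders. The sketch below is a hypothetical example: `parse_quantity`, its limit of 100 and the helper `rejects` are assumptions chosen for illustration, not code from any particular project.

```python
def parse_quantity(raw: str, maximum: int = 100) -> int:
    """Parse an order quantity from form input, rejecting bad values."""
    text = raw.strip()
    # Accept plain ASCII digits only: no signs, decimals or exponents.
    if not (text.isascii() and text.isdigit()):
        raise ValueError("quantity must be a whole number")
    value = int(text)
    if not 1 <= value <= maximum:
        raise ValueError("quantity out of range")
    return value


def rejects(raw: str) -> bool:
    """Return True if parse_quantity refuses the input."""
    try:
        parse_quantity(raw)
    except ValueError:
        return True
    return False


# Failure cases a reviewer would expect to stay rejected after any patch.
FAILURE_CASES = ["", "-1", "0", "101", "1e3", "2.5", "ten"]
```

If a generated patch rewrites `parse_quantity` and any entry in `FAILURE_CASES` stops being rejected, the change has weakened validation, whatever the browser shows.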

What to monitor

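Monitoring AI-generated code review should stay simple. Pick a few signals that reveal whether the control is being followed, ignored or stretched beyond its original purpose. A practical starting point is to track which merged changes touched the areas below and whether each one had a recorded review.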

- Authentication and authorisation changes

- Input handling

- Database queries

- New packages or generated helper functions
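For the "new packages" signal in the list above, a short script can compare the dependency list before and after a change. This is a minimal sketch that assumes a simple `requirements.txt`-style file with one `name` plus version specifier per line. The function names are hypothetical, and the parser deliberately ignores option lines, extras and URL-based requirements.

```python
import re


def parse_requirements(text: str) -> dict[str, str]:
    """Map each package name to its version specifier (empty if unpinned)."""
    packages = {}
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop trailing comments
        if not line or line.startswith("-"):  # skip blanks and option lines
            continue
        match = re.match(r"([A-Za-z0-9][A-Za-z0-9._-]*)\s*(.*)", line)
        if match:
            packages[match.group(1).lower()] = match.group(2).strip()
    return packages


def dependency_changes(before: str, after: str) -> dict[str, list[str]]:
    """Summarise added, removed and re-versioned packages between two files."""
    old, new = parse_requirements(before), parse_requirements(after)
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "changed": sorted(
            name for name in old.keys() & new.keys() if old[name] != new[name]
        ),
    }
```

Anything in the `added` list is a prompt for a human to check the package source and maintenance history before the change is merged.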

A 30-day improvement plan

Improve AI-generated code review in short cycles. Complete one action, record what changed, then use that evidence to decide the next step.

- Require human review for security-sensitive files

- Run automated tests

- Search for secrets

- Test failure paths before deployment

Why this should stay practical

A good review asks what changed, why it changed and what could break if the generated assumption is wrong.

The strongest control for AI-generated code review is the one people can follow during normal work. If the safe route is clear, quick and visible, it is more likely to become the default.

Decision rules for this topic

For generated code, the decision rule is evidence before deployment. Working once in a browser is not enough.

- Review generated code for assumptions, not only syntax.

- Check authentication, authorisation and data handling before visual polish.

- Run tests before merging AI-assisted changes.

Who should be involved

A developer should review logic, while a second reviewer checks security-sensitive areas when the patch touches users, data or payments.

When to revisit the guidance

Revisit the review process after bugs, rollbacks, dependency surprises or security findings in generated code.

Security-sensitive code deserves extra attention

Some files deserve more careful review than others. Authentication, authorisation, payment handling, form processing, database queries, file upload, email sending and admin settings are all areas where a small generated change can create a large vulnerability. Mark these areas clearly in the review process.

When the change touches one of those paths, ask for tests or a second reviewer. This is not mistrust of AI; it is normal engineering discipline applied to a faster drafting tool.
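Marking sensitive areas can be as simple as a short list of directory names that is checked against the files in a change. The sketch below assumes a hypothetical project layout; `SENSITIVE_DIRS`, `SENSITIVE_SUFFIXES` and the function name are placeholders to replace with the paths that matter in your own codebase.

```python
from pathlib import PurePosixPath

# Hypothetical names; adjust to the real layout of the project.
SENSITIVE_DIRS = {"auth", "payments", "admin", "uploads"}
SENSITIVE_SUFFIXES = {".sql"}


def files_needing_second_review(changed_files: list[str]) -> list[str]:
    """Return the changed files that sit in a security-sensitive area."""
    flagged = []
    for name in changed_files:
        path = PurePosixPath(name)
        in_sensitive_dir = bool(SENSITIVE_DIRS.intersection(path.parts[:-1]))
        if in_sensitive_dir or path.suffix in SENSITIVE_SUFFIXES:
            flagged.append(name)
    return flagged
```

Matching whole directory names rather than substrings avoids flagging unrelated paths such as `app/authors/bio.py`. Many code hosting platforms offer a built-in way to require specific reviewers for specific paths, which is usually preferable to a custom script where it is available.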

Where possible, keep AI-generated changes small. A narrow patch is easier to understand, test and revert. Large generated rewrites may look efficient, but they often make review harder and hide security-relevant decisions inside a broad diff.